Why Most Nonprofits Are Getting Agentic AI Wrong

92 percent of nonprofits use AI. Only 1.2 percent deploy AI agents. The gap between adoption and impact is growing, and most organizations are skipping the foundational work that makes agentic AI useful.

By Justin

Every CRM vendor in the nonprofit space is now selling "agentic AI." The pitch sounds compelling. Autonomous systems that research donors, draft outreach, optimize campaigns, and free your team to focus on relationships. Bonterra launched Que. Salesforce expanded Agentforce for Nonprofits. Smaller platforms are racing to bolt agent capabilities onto their existing tools.

Here is the problem. Most nonprofits adopting these tools are skipping the foundational work that makes agentic AI useful. And the gap between enthusiasm and results is growing fast.

A 2026 study by Virtuous and Fundraising.AI surveyed 346 nonprofits and found that while 92 percent now use AI in some form, only 7 percent report major improvements in organizational capability. Even more telling: just 1.2 percent are actually deploying AI agents. The rest are using generative AI for content drafting, email subject lines, and basic research. Helpful, but nowhere near the transformative potential the industry keeps promising.

The question is not whether agentic AI matters. It does. The question is why so many organizations are investing in it without the infrastructure to make it work.

What Agentic AI Actually Means

Traditional AI in fundraising is reactive. You ask a system to score donors and it scores them. You prompt it to write a thank-you email and it writes one. You run a report and it surfaces patterns. The human decides what to do next.

Agentic AI is different. An AI agent perceives its environment, reasons about what action to take, executes that action autonomously, and evaluates the result. It operates in a loop. Observe, plan, act, learn. When Bonterra describes Que analyzing millions of donor interactions to generate campaign strategies, or when Salesforce talks about Agentforce automating donor research and propensity scoring, they are describing this loop.
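To make the loop concrete, here is a minimal sketch of observe, plan, act, learn for a toy lapse-watch task. Everything in it is an assumption for illustration: the `LapseWatchAgent` class, the `days_since_last_gift` field, the 365-day threshold, and the rule for adjusting it. It does not describe how Que or Agentforce work internally.

```python
from dataclasses import dataclass, field


@dataclass
class LapseWatchAgent:
    """Toy agent (hypothetical): flags donors whose last gift is too old."""
    threshold_days: int = 365            # illustrative starting point
    log: list = field(default_factory=list)

    def observe(self, donors):
        # Observe: read the current state of the environment.
        return {d["id"]: d["days_since_last_gift"] for d in donors}

    def plan(self, state):
        # Plan: decide which donors need a check-in.
        return sorted(i for i, days in state.items() if days > self.threshold_days)

    def act(self, flagged):
        # Act: queue the work and keep a record of it.
        actions = [f"queue check-in for {i}" for i in flagged]
        self.log.extend(actions)
        return actions

    def learn(self, flagged, wrongly_flagged):
        # Learn: if staff rejected more than half the flags, be more conservative.
        if flagged and len(wrongly_flagged) / len(flagged) > 0.5:
            self.threshold_days += 30

    def run_once(self, donors, review):
        state = self.observe(donors)
        flagged = self.plan(state)
        actions = self.act(flagged)
        self.learn(flagged, review(flagged))
        return actions


donors = [
    {"id": "D1", "days_since_last_gift": 400},
    {"id": "D2", "days_since_last_gift": 90},
    {"id": "D3", "days_since_last_gift": 380},
]
agent = LapseWatchAgent()
# The reviewer here rejects every flag, so the agent loosens its threshold.
actions = agent.run_once(donors, review=lambda flagged: list(flagged))
```

The point of the sketch is the shape, not the rule: a reactive tool would stop after `plan` and hand the list to a person, while an agent carries on through `act` and `learn` without being asked.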

The distinction matters because it changes what your organization needs to be ready for. Reactive AI needs clean prompts and a human in the loop. Agentic AI needs clean data, clear guardrails, defined workflows, and organizational trust in automated decisions. Those are very different levels of operational maturity.

Most nonprofits are not there yet. That is not a criticism. It is a diagnosis.

The Readiness Gap

The data tells a clear story about where nonprofits actually stand with AI.

Eighty-one percent of nonprofit staff using AI are doing so individually, without shared workflows or organizational coordination. Nearly half have no AI governance policy. Sixty percent say they lack the in-house expertise to evaluate AI tools effectively. Only 4 percent have dedicated budget for AI-specific training.

This is the readiness gap. Organizations have adopted AI at the individual level: a development officer using ChatGPT to draft appeal letters, a marketing coordinator generating social media copy. But they have not built the organizational scaffolding that agentic AI requires.

Think about what an AI agent needs to function well in your fundraising operation. It needs access to accurate, unified donor records. It needs permission structures that define what it can and cannot do autonomously. It needs integration with your CRM, your email platform, your event management system, and your financial reporting. It needs someone accountable for monitoring its outputs and correcting its mistakes.

Without these foundations, deploying an AI agent is like hiring a new staff member and giving them no onboarding, no access to your systems, and no supervision. They might be brilliant, but they will still produce inconsistent work that nobody trusts.

The Unified Data Problem

The single biggest barrier to effective agentic AI in nonprofits is not the technology. It is the data.

When industry experts talk about creating a unified donor profile in 2026, they are describing something most nonprofits do not have: a single, comprehensive view of each supporter that combines giving history, engagement signals, event attendance, volunteer activity, communication preferences, and wealth indicators into one record.

Without unified data, your AI agent has digital amnesia. It can see a donor's last gift amount but not their event attendance pattern. It can pull their email open rate but not their volunteer history. It makes recommendations based on fragments rather than the full picture.
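A small sketch of what "unified" means in practice, assuming each system can export records that share an email address. The system names, field names, and the choice of email as the matching key are all hypothetical; real stacks usually need fuzzier matching than this.

```python
from collections import defaultdict

# Views we would expect a complete profile to contain (illustrative list).
EXPECTED_VIEWS = ("donations", "events", "email", "volunteering")


def unify_profiles(sources):
    """Merge per-system records into one profile per supporter, keyed by email."""
    profiles = defaultdict(dict)
    for system, records in sources.items():
        for record in records:
            # Normalize the key so "Ann@Example.org" and "ann@example.org " match.
            key = record["email"].strip().lower()
            profiles[key][system] = {k: v for k, v in record.items() if k != "email"}
    return dict(profiles)


def missing_views(profile):
    """List the systems that contributed nothing to this profile."""
    return [view for view in EXPECTED_VIEWS if view not in profile]


sources = {
    "donations": [{"email": "Ann@Example.org", "last_gift": 250}],
    "events": [{"email": "ann@example.org ", "galas_attended": 3}],
    "email": [{"email": "ann@example.org", "open_rate": 0.42}],
    "volunteering": [],
}
profiles = unify_profiles(sources)
ann = profiles["ann@example.org"]
gaps = missing_views(ann)
```

Here `gaps` tells the agent, and the humans supervising it, which part of the picture is missing before any recommendation is made.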

This is not theoretical. We evaluate nonprofit technology stacks regularly, and the pattern is consistent: organizations run three to five disconnected systems for donations, events, email, and volunteer management. Data lives in silos. Merge and purge processes are manual and infrequent. Donor records have duplicates, missing fields, and outdated contact information.

Layering agentic AI on top of fragmented data does not create intelligence. It creates confident-sounding mistakes at scale. An agent that recommends a major gift ask based on incomplete data does not just waste your time. It damages the donor relationship.

The Governance Question Nobody Wants to Answer

Here is the uncomfortable conversation most AI vendors skip. Who is accountable when an AI agent makes a bad decision?

If your agent sends an automated outreach sequence to a donor who recently lost a spouse, using an upbeat tone that ignores the circumstances, who owns that failure? If it reclassifies a major donor prospect based on outdated wealth data, causing your team to deprioritize the relationship, who catches that? If it generates a grant report with inaccurate program data because the underlying records were stale, who reviews it before it goes out?

Agentic AI makes decisions faster than humans. That is the point. But speed without governance creates risk. And for nonprofits, organizations built on trust, relationships, and community credibility, the downside of an AI mistake is not just inefficiency. It is reputational damage.

The 47 percent of nonprofits with no AI governance policy are not just behind on a compliance checkbox. They are deploying autonomous tools without a framework for accountability.

A practical governance framework for nonprofit AI agents should answer five questions. What decisions can the agent make without human approval? What triggers a human review before the agent acts? Who monitors agent outputs on a regular cadence? How do you audit agent decisions after the fact? What is the escalation path when something goes wrong?
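One way to force answers to those five questions is to write them down as a policy object the agent must pass through. The sketch below is an assumption-heavy illustration: `GovernancePolicy`, its field names, the action names, and the 1,000 review threshold are invented for the example and do not come from any vendor product.

```python
from dataclasses import dataclass, field


@dataclass
class GovernancePolicy:
    autonomous_actions: set          # Q1: what the agent may do without approval
    review_gift_threshold: float     # Q2: one trigger for human review
    monitor: str                     # Q3: who reviews outputs on a regular cadence
    escalation_contact: str          # Q5: who is called when something goes wrong
    audit_log: list = field(default_factory=list)  # Q4: after-the-fact audit trail

    def decide(self, action, donor_id, gift_amount=0.0, recently_bereaved=False):
        """Return 'auto', 'needs_review', or 'needs_approval' and log the decision."""
        if action not in self.autonomous_actions:
            outcome = "needs_approval"
        elif recently_bereaved or gift_amount >= self.review_gift_threshold:
            outcome = "needs_review"
        else:
            outcome = "auto"
        self.audit_log.append(
            {"action": action, "donor_id": donor_id, "outcome": outcome}
        )
        return outcome


policy = GovernancePolicy(
    autonomous_actions={"send_thank_you", "flag_duplicate"},
    review_gift_threshold=1000.0,
    monitor="development operations manager",
    escalation_contact="director of development",
)
routine = policy.decide("send_thank_you", "D1", gift_amount=50)
sensitive = policy.decide("send_thank_you", "D2", gift_amount=50, recently_bereaved=True)
strategic = policy.decide("change_prospect_rating", "D3")
```

If your team cannot fill in the constructor arguments, that is the same readiness signal as not being able to answer the questions in prose.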

If you cannot answer those questions today, you are not ready for agentic AI. You might be ready for AI-assisted workflows with a human in the loop. There is nothing wrong with that. It is a smarter starting point than most organizations realize.

What Actually Works: The Signal-Assist-Agent Framework

Rather than jumping straight to autonomous AI agents, we recommend nonprofits follow a staged approach that builds capability over time.

Stage One: Signal. Use AI to surface insights from your existing data. Predictive donor scoring. Lapse risk identification. Giving pattern analysis. The AI identifies signals. Your team decides what to do with them. This stage requires clean data and a functioning CRM, but it does not require autonomous decision-making. Most nonprofits should spend six to twelve months here, using the time to clean up their data infrastructure and build confidence in AI-generated insights.

Stage Two: Assist. Move to AI-assisted workflows where the system drafts recommendations and your team approves them. The AI drafts a stewardship sequence for at-risk donors. Your development officer reviews and sends it. The AI suggests optimal ask amounts based on capacity data. Your major gifts team validates the recommendation before acting. This stage introduces automation but keeps humans in the decision loop. It also builds the governance muscle you need for Stage Three.

Stage Three: Agent. Deploy fully autonomous AI agents for well-defined, bounded tasks where the risk of error is low and the cost of human intervention is high. Automated thank-you sequences triggered by gift data. Routine donor research compilation for prospect briefings. Data hygiene tasks like duplicate detection and address verification. Expand the agent's autonomy gradually, always with monitoring, always with clear escalation paths.
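The three stages can be sketched as a routing rule that decides how far an AI-generated recommendation is allowed to travel on its own. The `Stage` enum, the `route` function, and the task names below are hypothetical, chosen only to mirror the examples in the text.

```python
from enum import Enum


class Stage(Enum):
    SIGNAL = 1   # AI surfaces insights; humans decide everything
    ASSIST = 2   # AI drafts; humans approve before anything is sent
    AGENT = 3    # AI acts alone, but only on bounded, low-risk tasks


# Illustrative allowlist of bounded tasks, taken from the Stage Three examples.
BOUNDED_TASKS = {"thank_you_sequence", "research_briefing", "duplicate_detection"}


def route(task, stage, approved_by_human=False):
    """Decide what happens to a recommendation at a given maturity stage."""
    if stage is Stage.SIGNAL:
        return "report_only"
    if stage is Stage.ASSIST:
        return "execute" if approved_by_human else "await_approval"
    # Stage.AGENT: autonomy applies only inside the allowlist.
    if task in BOUNDED_TASKS or approved_by_human:
        return "execute"
    return "await_approval"


signal = route("major_gift_ask", Stage.SIGNAL)
assist_pending = route("stewardship_sequence", Stage.ASSIST)
assist_approved = route("stewardship_sequence", Stage.ASSIST, approved_by_human=True)
agent_bounded = route("thank_you_sequence", Stage.AGENT)
agent_unbounded = route("major_gift_ask", Stage.AGENT)
```

Note that even at the agent stage, a task outside the allowlist falls back to human approval. Expanding autonomy then means adding one task to `BOUNDED_TASKS` at a time.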

The organizations seeing real results from AI in fundraising are not the ones deploying the most advanced tools. They are the ones who built the foundation first. Unified data. Clear governance. Trained staff. Incrementally expanding automation. The 20 to 30 percent donation increases that some early adopters report come from this disciplined approach, not from flipping a switch.

Where Agents Are Already Delivering

Despite the caution above, there are specific areas where agentic AI is already producing measurable results for nonprofits that have done the preparation work.

Automated donor research is the clearest near-term win. An AI agent that continuously scans public records, news sources, and wealth indicators to compile prospect briefings saves development officers hours of manual work each week. The task is bounded. The agent researches and compiles. A human reviews the briefing before acting on it. Low risk, immediate time savings.

Data hygiene is another strong use case. AI agents that continuously scan your donor database for duplicate records, outdated addresses, deceased indicators, and inconsistent formatting can maintain data quality at a level that manual processes cannot match. These agents operate on your internal data with clear rules. Low risk, high impact.
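As a rough illustration of the "clear rules" such an agent might apply, here is a sketch of duplicate detection that groups records by a normalized name and postal code. The field names and the matching rule are assumptions; production matching typically adds fuzzy comparison and a human merge review.

```python
import re
from collections import defaultdict


def match_key(record):
    """Build a comparison key that ignores case, spacing, and punctuation."""
    name = re.sub(r"[^a-z]", "", record["name"].lower())
    postal = record["postal_code"].replace(" ", "").upper()
    return (name, postal)


def find_duplicate_groups(records):
    """Return lists of record ids that share the same normalized key."""
    groups = defaultdict(list)
    for record in records:
        groups[match_key(record)].append(record["id"])
    # Only groups with more than one id are candidate duplicates.
    return [ids for ids in groups.values() if len(ids) > 1]


records = [
    {"id": 1, "name": "Ann Lee", "postal_code": "45202"},
    {"id": 2, "name": "ann  lee ", "postal_code": "45202 "},
    {"id": 3, "name": "Ann Lee", "postal_code": "10001"},
]
duplicates = find_duplicate_groups(records)
```

The function only proposes candidate groups. Whether records 1 and 2 are actually merged is a decision that can stay with a person until the team trusts the rule.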

Post-gift stewardship sequencing is emerging as a third area. An agent that monitors gift transactions and triggers personalized thank-you sequences, adjusting timing, channel, and message based on gift size, donor history, and engagement patterns, can dramatically improve the stewardship experience without requiring your team to manage every touchpoint manually.

The common thread across these successful implementations is restraint. The agents handle specific, well-defined tasks with clear boundaries and human oversight. They are not making strategic decisions about donor relationships. They are handling operational work that frees your team to focus on the relational work that humans do best.

What to Ask Before You Buy

If a vendor is pitching you agentic AI capabilities right now, here is what to ask before you sign anything.

How does the AI access your data, and does it create a unified donor profile or operate on top of your existing fragmented systems? If it is the latter, the agent's recommendations will only be as good as its worst data source.

What can the agent do autonomously versus what requires human approval? If the vendor cannot give you a clear, specific answer, their product likely has not been designed with nonprofit-appropriate guardrails.

How will you monitor and audit agent decisions? Dashboard reporting is not enough. You need the ability to review individual decisions, understand why the agent made them, and override them when necessary.

What training and change management support comes with the product? A tool is only as effective as the team using it. If the implementation plan does not include staff training and workflow redesign, you are buying software, not capability.

What happens to your data? Agentic AI systems often require broad access to your donor records, communication history, and financial data. Understand where that data goes, how it is stored, who else can access it, and what happens if you leave the platform.
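For the monitoring and audit question above, it can help to picture the minimum a reviewable decision record would contain: what the agent did, why, on which inputs, and whether a person overrode it. The `DecisionRecord` shape below is a hypothetical sketch to bring to a vendor conversation, not any product's actual schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DecisionRecord:
    donor_id: str
    action: str
    rationale: str                 # why the agent chose this action
    inputs: dict                   # the data it relied on
    overridden_by: Optional[str] = None
    override_reason: Optional[str] = None

    def override(self, reviewer, reason):
        """Record that a human reversed this decision, and why."""
        self.overridden_by = reviewer
        self.override_reason = reason

    @property
    def effective(self):
        # A decision stays in effect unless someone has overridden it.
        return self.overridden_by is None


def overridden_share(records):
    """Fraction of decisions that humans reversed; a simple trust signal."""
    if not records:
        return 0.0
    return sum(1 for r in records if not r.effective) / len(records)


first = DecisionRecord("D1", "send_thank_you", "gift received", {"gift_amount": 50})
second = DecisionRecord("D2", "lower_prospect_rating", "wealth score dropped",
                        {"wealth_data_age_days": 900})
second.override("major gifts officer", "wealth data is stale")
share = overridden_share([first, second])
```

If a vendor's reporting cannot reconstruct something like `rationale` and `inputs` for a single decision, a summary dashboard will not tell you why the agent did what it did.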

The Bottom Line

Agentic AI will transform nonprofit fundraising. The trajectory is clear. Autonomous systems that handle donor research, optimize outreach timing, personalize engagement at scale, and flag relationship risks before they become losses will become standard tools for well-run development operations.

But the organizations that benefit most will not be the earliest adopters. They will be the best-prepared ones. Clean your data first. Build governance frameworks second. Train your teams third. Deploy agents fourth. That sequence outperforms skipping to Step Four because a vendor demo looked impressive.

Start with signals. Build toward assistance. Earn your way to agents.

That is not caution. That is strategy.

Related Solutions

Get the AI Team Playbook

10 practical AI tools your team can start using today — automations, custom GPTs, AI agents, and prompt frameworks that actually save time.

Want to put this into practice?

Book a 30-minute call. We'll talk through how this applies to your business and where the biggest opportunities are.

Book a Discovery CallRelated Insights

Thought Leadership

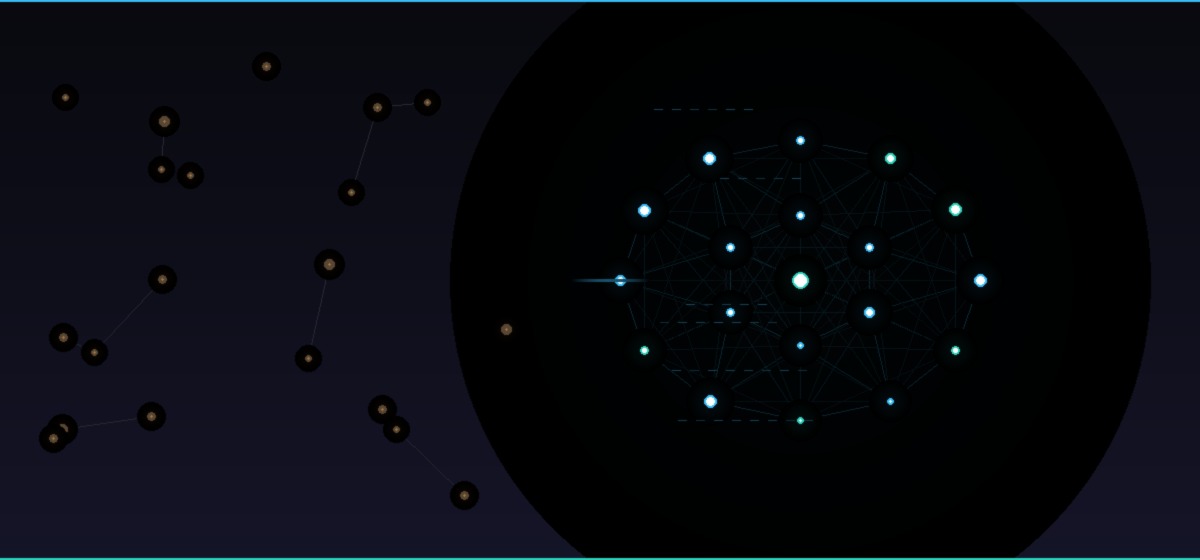

We Built a Swarm Before We Sold One

Queen City AI built its own agent swarm before offering to build anyone else's. Here is what we learned, and why we are ready to build yours.

Read insightThought Leadership

Queen City AI Launches Donorsignal.org

We launched DonorSignal.org to help nonprofits fix their fundraising infrastructure, clean up their systems, and get time back for the work that matters.

Read insightThought Leadership

Where AI Actually Helps Boutique Property Management Firms

Boutique property management firms do not struggle because they lack strategy. They struggle when operational drag compounds quietly over time.

Read insight